Basic Introduction

Designers and developers frequently need to convert image assets into editable design drafts. AI can now automate much of this process, which greatly improves work efficiency. This article explains how to use AI to convert images into design drafts, covering AI recognition, Figma design draft generation, application scenarios, and recommended Figma plugins.

AI Recognition

AI recognition is the core step in converting image content into Figma design drafts. It refers to the use of artificial intelligence algorithms, such as machine learning and deep learning, to identify and understand the content of images. This involves multiple sub-tasks, including image segmentation, object recognition, and text recognition (OCR). Below, we will detail the processes and technical implementations of these sub-tasks.

AI Recognition Process Flowchart

Below is the AI recognition process flowchart for converting images into Figma design drafts:

    Image Input
      │
      ├───> Image Segmentation ────> Identify Element Boundaries
      │
      ├───> Object Recognition ────> Match Design Elements
      │
      └───> Text Recognition ──────> Extract and Process Text
              │
              └───> Figma Design Draft Generation

Through the above process, AI can effectively convert the design elements and text in images into corresponding elements in Figma design drafts. This process greatly simplifies the work of designers and improves the efficiency and accuracy of design.

Image Segmentation

Image segmentation is the process of identifying and separating each individual element within an image. This is typically achieved through deep learning techniques such as Convolutional Neural Networks (CNNs). A popular network architecture for this purpose is U-Net, which is particularly well-suited for image segmentation tasks.

Technical Implementation:

- Data Preparation: Collect a large number of annotated images of design elements, with annotations including the boundaries of each element.

- Model Training: Train a model using the U-Net architecture to enable it to recognize different design elements.

- Segmentation Application: Apply the trained model to new images to output the precise location and boundaries of each element.
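The segmentation application step can be illustrated without any deep learning library. The following is a minimal sketch, assuming the trained model has already produced a per-pixel probability mask as a nested list; the function name `mask_to_boxes` and the 0.5 threshold are illustrative choices, not part of any particular toolkit. It groups neighbouring foreground pixels with a flood fill and reports one bounding box per element.

```python
from collections import deque

def mask_to_boxes(mask, threshold=0.5):
    """Return one (x, y, width, height) box per connected region of the mask."""
    height, width = len(mask), len(mask[0])
    seen = [[False] * width for _ in range(height)]
    boxes = []
    for row in range(height):
        for col in range(width):
            if mask[row][col] < threshold or seen[row][col]:
                continue
            # Breadth-first flood fill over 4-connected neighbours
            queue = deque([(row, col)])
            seen[row][col] = True
            min_r, max_r, min_c, max_c = row, row, col, col
            while queue:
                r, c = queue.popleft()
                min_r, max_r = min(min_r, r), max(max_r, r)
                min_c, max_c = min(min_c, c), max(max_c, c)
                for nr, nc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)):
                    if (0 <= nr < height and 0 <= nc < width
                            and not seen[nr][nc] and mask[nr][nc] >= threshold):
                        seen[nr][nc] = True
                        queue.append((nr, nc))
            boxes.append((min_c, min_r, max_c - min_c + 1, max_r - min_r + 1))
    return boxes

# Toy 4x6 mask containing two separate elements (hypothetical model output)
example_mask = [
    [0.9, 0.8, 0.0, 0.0, 0.0, 0.0],
    [0.9, 0.7, 0.0, 0.0, 0.6, 0.9],
    [0.0, 0.0, 0.0, 0.0, 0.8, 0.9],
    [0.0, 0.0, 0.0, 0.0, 0.0, 0.0],
]
print(mask_to_boxes(example_mask))
```

In practice the mask would come from the model's prediction on a real image, and touching elements may need a more careful separation strategy than simple connectivity.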

Code Example (using Python and TensorFlow):

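    import tensorflow as tf
    from tensorflow.keras.layers import Input, Conv2D, MaxPooling2D, UpSampling2D, concatenate
    from tensorflow.keras.models import Model

    def unet_model(input_size=(256, 256, 3)):
        inputs = Input(input_size)
        # Reduced-depth stand-in for the omitted U-Net body; a full U-Net repeats
        # the encoder and decoder stages below several times.
        # Encoder: convolve, then downsample
        conv1 = Conv2D(64, 3, activation='relu', padding='same')(inputs)
        pool1 = MaxPooling2D(pool_size=(2, 2))(conv1)
        # Bottleneck
        conv2 = Conv2D(128, 3, activation='relu', padding='same')(pool1)
        # Decoder: upsample and concatenate the matching encoder features (skip connection)
        up1 = concatenate([UpSampling2D(size=(2, 2))(conv2), conv1], axis=3)
        conv9 = Conv2D(64, 3, activation='relu', padding='same')(up1)
        # One-channel sigmoid output: per-pixel probability of belonging to an element
        outputs = Conv2D(1, (1, 1), activation='sigmoid')(conv9)
        model = Model(inputs=inputs, outputs=outputs)
        model.compile(optimizer='adam', loss='binary_crossentropy', metrics=['accuracy'])
        return model

    # Code to load the dataset, train the model, and apply the model would be implemented here

Object Recognition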

Object recognition refers to identifying specific objects within an image, such as buttons, icons, etc., and matching them with a predefined library of design elements.

Technical Implementation:

- Data Preparation: Create a dataset containing various design elements and their category labels.

- Model Training: Use pre-trained CNN models such as ResNet or Inception for transfer learning to recognize different design elements.

- Object Matching: Match the identified objects with elements from the design element library to reconstruct them in Figma.
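The object matching step can be sketched as a simple lookup once the classifier has produced class probabilities. This is a minimal sketch under stated assumptions: `ELEMENT_LIBRARY`, `CLASS_NAMES`, the `corner_radius` field, and the 0.6 confidence threshold are all hypothetical and stand in for whatever design element library a real project maintains.

```python
# Hypothetical library mapping classifier labels to Figma-side element types
ELEMENT_LIBRARY = {
    "button": {"figma_type": "RECTANGLE", "corner_radius": 8},
    "icon": {"figma_type": "VECTOR"},
    "text_field": {"figma_type": "FRAME"},
}
# Assumed class order of the classifier's softmax output
CLASS_NAMES = ["button", "icon", "text_field"]

def match_element(probabilities, min_confidence=0.6):
    """Pick the most likely class and look it up in the element library."""
    best_index = max(range(len(probabilities)), key=probabilities.__getitem__)
    confidence = probabilities[best_index]
    if confidence < min_confidence:
        return None  # too uncertain: leave the element for manual review
    label = CLASS_NAMES[best_index]
    return {"label": label, "confidence": confidence, **ELEMENT_LIBRARY[label]}

print(match_element([0.85, 0.10, 0.05]))  # confident prediction
print(match_element([0.40, 0.35, 0.25]))  # below the threshold
```

Returning `None` for low-confidence predictions keeps uncertain elements out of the generated draft so that a designer can handle them by hand.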

Code Example (using Python and TensorFlow):

    from tensorflow.keras.applications.resnet50 import ResNet50, preprocess_input
    from tensorflow.keras.preprocessing.image import ImageDataGenerator
    from tensorflow.keras.layers import Dense, GlobalAveragePooling2D
    from tensorflow.keras.models import Model

    # Number of design element categories in the dataset (placeholder value)
    num_classes = 10

    # Load the pre-trained ResNet50 model without its ImageNet classification head
    base_model = ResNet50(weights='imagenet', include_top=False)

    # Add custom layers
    x = base_model.output
    x = GlobalAveragePooling2D()(x)
    x = Dense(1024, activation='relu')(x)
    predictions = Dense(num_classes, activation='softmax')(x)

    # Construct the final model
    model = Model(inputs=base_model.input, outputs=predictions)

    # Freeze the layers of ResNet50 so that only the new layers are trained
    for layer in base_model.layers:
        layer.trainable = False

    # Compile the model
    model.compile(optimizer='rmsprop', loss='categorical_crossentropy')

    # Code to train the model would be implemented here

Text Recognition (OCR)

Text recognition (OCR) technology is used to extract text from images and convert it into an editable text format.

Technical Implementation:

- Use OCR tools (such as Tesseract) to recognize text within images.

- Perform post-processing on the recognized text, including language correction and format adjustment.

- Import the processed text into the Figma design draft.
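The post-processing step above can be sketched with the standard library alone. This is a minimal sketch that assumes the only cleanup needed is whitespace normalization and removal of empty lines; the function name `clean_ocr_text` is illustrative, and real language correction (such as spell checking) would need additional tooling.

```python
import re

def clean_ocr_text(raw_text):
    """Normalize whitespace and drop empty lines from raw OCR output."""
    cleaned_lines = []
    for line in raw_text.splitlines():
        # Collapse runs of spaces, tabs, and stray control whitespace
        line = re.sub(r"\s+", " ", line).strip()
        if line:
            cleaned_lines.append(line)
    return cleaned_lines

# Hypothetical raw OCR output with uneven spacing and blank lines
raw = "  Sign   in \n\n\nForgot  password?\t\n \x0c"
print(clean_ocr_text(raw))
```

Each returned line can then become the content of one text element in the design draft.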

Code Example (using Python and Tesseract):

    import pytesseract
    from PIL import Image

    # Configure Tesseract path
    pytesseract.pytesseract.tesseract_cmd = r'path_to_tesseract'

    # Load image
    image = Image.open('example.png')

    # Apply OCR
    text = pytesseract.image_to_string(image, lang='eng')

    # Output the recognized text
    print(text)

    # Code to import the recognized text into Figma would be implemented here

Figma Design Draft Generation

Converting an image into a Figma design draft involves reconstructing the elements recognized by AI into objects in Figma, and applying the corresponding styles and layouts. This process can be divided into several key steps: design element reconstruction, style matching, and layout automation.

Figma Design Draft Generation Flowchart

Below is the flowchart for converting images into Figma design drafts:

    AI Recognition Results
      │
      ├───> Design Element Reconstruction ──> Create Figma shape/text elements
      │         │
      │         └───> Set size and position
      │
      ├───> Style Matching ───────────────> Apply styles such as color, font, etc.
      │
      └───> Layout Automation ────────────> Set element constraints and layout grids

Through the above process, we can convert the elements and style information recognized by AI into design drafts in Figma.

Design Element Reconstruction

In the AI recognition phase, we have already obtained the boundaries and categories of each element in the image. Now, we need to reconstruct these elements in Figma.

Technical Implementation:

- Use the Figma API to create corresponding shapes and text elements.

- Set the size and position of the elements based on the information recognized by AI.

- If the element is text, also set the font, size, and color.
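Before any Figma call is made, the recognition output has to be translated into the values Figma expects. The following is a minimal sketch of that translation, assuming each recognized element arrives as a dictionary with hypothetical `category`, `box`, `color`, and `text` keys. One detail it illustrates is that Figma represents color channels as numbers between 0 and 1, so hex colors need converting.

```python
def hex_to_unit_rgb(hex_color):
    """Convert '#RRGGBB' to r, g, b channels in the 0-1 range."""
    value = hex_color.lstrip("#")
    r, g, b = (int(value[i:i + 2], 16) for i in (0, 2, 4))
    return {"r": r / 255, "g": g / 255, "b": b / 255}

def to_node_spec(element):
    """Translate one recognized element into a plain description of a node."""
    node_type = "TEXT" if element["category"] == "text" else "RECTANGLE"
    x, y, width, height = element["box"]
    spec = {
        "type": node_type,
        "x": x,
        "y": y,
        "width": width,
        "height": height,
        "fills": [{"type": "SOLID", "color": hex_to_unit_rgb(element["color"])}],
    }
    if node_type == "TEXT":
        spec["characters"] = element.get("text", "")
    return spec

# Hypothetical recognition result for a single element
recognized = {"category": "button", "box": (100, 50, 200, 100), "color": "#FF5733"}
print(to_node_spec(recognized))
```

The resulting dictionary is only an intermediate description; a plugin would read it and create the actual node.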

Code Example (using the Figma Plugin API, because the Figma REST API cannot create nodes in a file):

    // Assume we already have information about an element, including type, position, size, and style
    const elementInfo = {
      type: 'rectangle',
      x: 100,
      y: 50,
      width: 200,
      height: 100,
      fill: '#FF5733'
    };

    // Plugin API colors use r, g, b channels in the 0-1 range, so convert the hex value
    const hexToRgb = hex => ({
      r: parseInt(hex.slice(1, 3), 16) / 255,
      g: parseInt(hex.slice(3, 5), 16) / 255,
      b: parseInt(hex.slice(5, 7), 16) / 255
    });

    // Create the rectangle inside a plugin, then set its position, size, and fill
    const rect = figma.createRectangle();
    rect.x = elementInfo.x;
    rect.y = elementInfo.y;
    rect.resize(elementInfo.width, elementInfo.height);
    rect.fills = [{ type: 'SOLID', color: hexToRgb(elementInfo.fill) }];
    figma.currentPage.appendChild(rect);

Style Matching

Style matching refers to applying the style information recognized by AI to Figma elements, including color, margins, shadows, etc.

Technical Implementation:

- Parse the style data recognized by AI.

- Use the Figma API to update the style properties of the elements.
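Parsing the recognized style data mostly means converting it into the units and names Figma uses. This is a minimal sketch, assuming the recognizer reports colors as 0-255 channels and fonts as a family plus a numeric weight; the `WEIGHT_TO_STYLE` table is an assumed mapping, and the style names actually available depend on the font family.

```python
# Assumed mapping from numeric font weight to a font style name
WEIGHT_TO_STYLE = {300: "Light", 400: "Regular", 500: "Medium", 700: "Bold"}

def normalize_style(style):
    """Scale 0-255 color channels to 0-1 and resolve a font style name."""
    color = {channel: style["color"][channel] / 255 for channel in ("r", "g", "b")}
    # Fall back to the nearest known weight when the exact one is missing
    nearest = min(WEIGHT_TO_STYLE, key=lambda weight: abs(weight - style["fontWeight"]))
    return {
        "color": color,
        "fontSize": style["fontSize"],
        "fontName": {"family": style["fontFamily"], "style": WEIGHT_TO_STYLE[nearest]},
    }

# Hypothetical style data recognized for one text element
style_info = {"color": {"r": 255, "g": 87, "b": 51}, "fontSize": 16,
              "fontFamily": "Roboto", "fontWeight": 400}
print(normalize_style(style_info))
```

Doing this normalization in one place keeps the code that talks to Figma free of unit conversions.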

Code Example (using the Figma Plugin API, because the Figma REST API cannot modify nodes in a file):

    // Assume we already have the style information
    const styleInfo = {
      color: { r: 255, g: 87, b: 51 },
      fontSize: 16,
      fontFamily: 'Roboto',
      fontWeight: 400
    };

    // Update the style of a text element inside a plugin
    async function applyTextStyle(textNode) {
      // The Plugin API identifies fonts by style name; weight 400 is assumed to map to 'Regular'
      const fontName = { family: styleInfo.fontFamily, style: 'Regular' };
      // A font must be loaded before text properties can be changed
      await figma.loadFontAsync(fontName);
      textNode.fontName = fontName;
      textNode.characters = 'Example Text';
      textNode.fontSize = styleInfo.fontSize;
      // Plugin API colors use the 0-1 range, so scale the 0-255 channels
      const { r, g, b } = styleInfo.color;
      textNode.fills = [{ type: 'SOLID', color: { r: r / 255, g: g / 255, b: b / 255 } }];
    }

    applyTextStyle(figma.createText())
      .catch(error => console.error('Error:', error));

Intelligent Layout

Intelligent layout refers to the smart arrangement of elements based on their relative positional relationships in Figma.

Technical Implementation:

- Analyze the spatial relationships between elements.

- Use the Figma API to set constraints and layout grids for the elements.
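Analyzing spatial relationships can start with something as simple as containment between bounding boxes. The following is a minimal sketch, assuming each element is described by an `(x, y, width, height)` box; the helper names and the sample element names are illustrative. It treats the smallest box that fully contains an element as that element's parent, which yields the hierarchy needed to nest elements inside frames.

```python
def contains(outer, inner):
    """True when box `inner` lies fully inside box `outer` (x, y, width, height)."""
    ox, oy, ow, oh = outer
    ix, iy, iw, ih = inner
    return ox <= ix and oy <= iy and ix + iw <= ox + ow and iy + ih <= oy + oh

def assign_parents(boxes):
    """Map each element name to the smallest other element that contains it."""
    parents = {}
    for name, box in boxes.items():
        candidates = [
            (other_box[2] * other_box[3], other)  # (area, name) so min() picks the smallest
            for other, other_box in boxes.items()
            if other != name and other_box != box and contains(other_box, box)
        ]
        parents[name] = min(candidates)[1] if candidates else None
    return parents

# Hypothetical recognized elements: a card holding a button, which holds a label
boxes = {
    "card": (0, 0, 300, 200),
    "button": (20, 140, 120, 40),
    "label": (30, 150, 100, 20),
}
print(assign_parents(boxes))
```

Elements with no parent would be placed at the top level of the page, while the others would be appended to the frame created for their parent.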

Code Example (using Figma Plugin API):

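    // Assume we already have the spatial relationships between elements.
    // 'Frame_1' etc. are placeholders; real Figma node IDs look like '12:34'.
    const layoutInfo = {
      parentFrame: 'Frame_1',
      childElements: ['Rectangle_1', 'Text_1']
    };

    // Set constraints for elements within a Figma plugin
    // (plugins that load pages dynamically must use figma.getNodeByIdAsync instead)
    const parentFrame = figma.getNodeById(layoutInfo.parentFrame);
    if (parentFrame && parentFrame.type === 'FRAME') {
      layoutInfo.childElements.forEach(childId => {
        const child = figma.getNodeById(childId);
        if (child && 'constraints' in child) {
          // Move the child into the frame first; constraints are relative to the parent frame
          parentFrame.appendChild(child);
          child.constraints = { horizontal: 'SCALE', vertical: 'SCALE' };
        }
      });
    }

Application Scenarios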

Design Restoration

When designers need to recreate design drafts based on images provided by clients, AI conversion can significantly reduce manual operations.

Rapid Prototyping

During the rapid prototyping phase, designers can convert sketches or screenshots into Figma design drafts to accelerate the iteration process.

Design Iteration

When making modifications to existing designs, one can start directly from photos of the physical product, rather than designing from scratch.

Content Migration

Migrate content from paper documents or legacy websites into a new design framework.

Collaboration

Team members can share design ideas through physical images, and AI helps quickly convert them into a format for collaborative work.

Design System Integration

Convert existing design elements into Figma components to build or expand a design system.

Screenshot to Figma Design Plugins

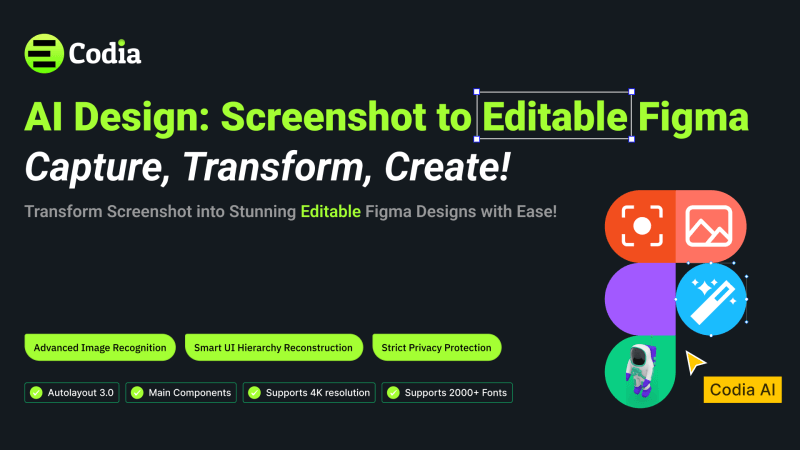

- Codia AI Design: This plugin transforms screenshots into editable Figma UI designs. Simply upload a screenshot of an app or website, and it does the rest. Codia AI Code also supports Figma to Code, with high-fidelity code generation for Android, iOS, Flutter, HTML, CSS, React, Vue, and more.

- Photopea: An integrated image editor that enables you to edit images within Figma and convert them into design elements.

Plugins for extracting design elements (such as colors, fonts, layout, etc.) from images:

- Image Palette – Extracts primary colors from an image and generates a color scheme.

- Image Tracer – Converts bitmap images into vector paths, allowing you to edit them in Figma.

- Unsplash – Search and insert high-quality, free images directly within Figma, great for quick prototyping.

- Content Reel – Fill your designs with real content (including images) to help designers create more realistic prototypes.

- PhotoSplash 2 – Another plugin for searching and using high-resolution photos within Figma.

- Figmify – Directly import images from the web into Figma, saving the time of downloading and uploading.

- TinyImage Compressor – Compresses images in Figma to optimize project performance.

- Remove.bg – A plugin that automatically removes the background of images, ideal for processing product photos or portraits.

- Pexels – Similar to Unsplash, this plugin offers a large collection of free-to-use image resources.
